What Is RAG and Why Does It Matter for Your Business?

Retrieval-Augmented Generation explained in plain English. How RAG lets AI answer questions using your actual business data — and why it's the technology behind the most useful private AI deployments.

You've probably heard someone mention "RAG" in a conversation about AI. Maybe your IT person brought it up. Maybe you read it in a vendor pitch. The acronym stands for Retrieval-Augmented Generation, which tells you almost nothing about what it actually does.

Here's what it means in plain English, why it matters for your business, and how it works in a private AI deployment.

The problem RAG solves

AI models like ChatGPT and Claude are trained on massive datasets made up largely of publicly available text. They know a lot about the world in general. Out of the box, they know nothing about your business specifically.

Ask ChatGPT about your firm's standard engagement letter terms, your company's approach to change orders, or your practice's prior authorization workflow — and it will either make something up (this is called "hallucination") or give you a generic answer that doesn't reflect how your organization actually operates.

This is the fundamental limitation of general-purpose AI: it doesn't know your data.

RAG fixes this.

How RAG works

RAG is a two-step process that happens behind the scenes every time someone asks the AI a question:

Step 1: Retrieve

Before the AI generates an answer, the system searches your organization's documents — contracts, case files, meeting notes, policies, project records, whatever you've loaded into the knowledge base. It finds the specific documents and passages that are relevant to the question being asked.

Step 2: Generate

The AI then generates its answer using both its general knowledge and the specific documents it just retrieved from your data. The response is grounded in your actual information, not just the AI's training data.

The result: Instead of a generic answer, you get an answer based on your organization's real documents, real history, and real data.

A concrete example

Suppose an attorney at your firm asks the AI: "What was our approach to the tenant improvement clause dispute with the commercial lease client in 2022?"

Without RAG, the AI would say something like "Tenant improvement clauses typically cover...", a generic legal textbook answer that isn't helpful.

With RAG, the system first searches your firm's case files, meeting notes, and internal memos. It finds the relevant documents from that specific matter. Then it generates an answer that references your firm's actual approach, the specific arguments you used, and the outcome — citing the source documents so the attorney can verify.

That's the difference between a chatbot and a genuinely useful business tool.

Why RAG matters for private AI

RAG is especially important for private AI deployments because the entire point of private AI is to work with your sensitive data. Here's how it fits:

Your data stays local

In a private deployment, your documents are indexed and stored on your hardware. When the RAG system retrieves relevant documents, it's searching a knowledge base that exists entirely on your machine. No document content is sent to any external server. For law firms, medical practices, and government contractors, this is critical — your most sensitive data is being used to generate answers, and it never leaves your building.

It gets smarter as you add data

Every document you add to the knowledge base makes the system more useful. Case files from five years ago become searchable. Meeting notes that lived in someone's notebook become queryable. Institutional knowledge that used to walk out the door when an employee left becomes permanent and accessible.

It reduces hallucination

RAG substantially reduces how often the AI makes things up. Because the system retrieves actual documents before generating an answer, the response is grounded in real information rather than the model's best guess. It does not eliminate errors entirely, which is why the AI cites its sources: your team can check any answer against the original documents.

It works with any document type

Contracts, memos, meeting transcripts, emails, policies, specifications, reports — anything text-based can be added to the knowledge base. The RAG system indexes it and makes it searchable through natural language questions.

RAG in practice: by industry

Law firms

Your firm's entire case history becomes searchable. Any attorney asks a question — "How did we handle the non-compete enforcement issue for the technology client?" — and gets an answer grounded in your actual case files, with citations to the specific documents. Learn more about private AI for law firms.

Medical practices

Clinical protocols, prior authorization templates, referral patterns, and internal procedures become instantly accessible. A medical assistant can ask "What's our process for the Cigna prior auth appeal for MRI?" and get the answer from your actual documentation — on hardware in your office. Learn more about HIPAA-compliant AI.

Construction firms

Historical bid data, project records, subcontractor performance notes, and change order documentation become a searchable knowledge base. An estimator can ask "What did we pay for steel erection on the last three commercial projects in this size range?" and get actual numbers from your records. Learn more about private AI for construction.

Financial advisors

Client communication templates, compliance procedures, investment committee materials, and planning frameworks become queryable. An advisor can ask "What was our recommended allocation strategy for clients in the 55-65 age bracket with $2M+ portfolios?" and get the answer from your actual models and guidelines. Learn more about private AI for financial advisors.

The technical details (simplified)

For the technically curious, here's what's happening under the hood:

Document ingestion: Your documents are loaded into the system. Each document is split into chunks and converted into numerical representations called "embeddings" — essentially, math that captures the meaning of the text.
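
For readers who want to see the ingestion step in code, here is a minimal sketch in Python. It is an illustration under stated assumptions, not the pipeline a production system would use: the function names `split_into_chunks` and `toy_embedding` are hypothetical, and the "embedding" is a simple hashed word count standing in for a real embedding model. A real model captures meaning; this stand-in only captures which words appear.

```python
import hashlib
import math

def split_into_chunks(text, max_words=50, overlap=10):
    """Split text into overlapping windows of words (hypothetical helper)."""
    if overlap >= max_words:
        raise ValueError("overlap must be smaller than max_words")
    words = text.split()
    step = max_words - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # the last window already reached the end of the text
    return chunks

def toy_embedding(text, dims=64):
    """Stand-in for a real embedding model: hashed bag of words, unit length.

    Assumption for illustration only. It records word occurrence, not meaning.
    """
    vec = [0.0] * dims
    for word in text.lower().split():
        digest = hashlib.md5(word.encode("utf-8")).digest()
        vec[int.from_bytes(digest[:4], "big") % dims] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec
```

The overlap between neighboring chunks is a common design choice: it keeps a sentence that straddles a chunk boundary from being cut off from its context.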

Vector storage: These embeddings are stored in a vector database on your local hardware. This is the searchable index of your organization's knowledge.

Query processing: When a user asks a question, their question is also converted into an embedding. The system compares this against all stored embeddings to find the most relevant document chunks.
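
The comparison described here is often done with cosine similarity, which scores how closely two embeddings point in the same direction. The sketch below assumes that measure and uses tiny hand-written vectors in place of real embeddings; the chunk IDs and file names are made up for illustration. A real vector database would typically use a specialized index rather than scanning every stored vector.

```python
import math

def cosine_similarity(a, b):
    """Return the cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def top_k(query_vec, index, k=2):
    """index is a list of (chunk_id, vector) pairs; return the k best (chunk_id, score) pairs."""
    scored = [(chunk_id, cosine_similarity(query_vec, vec)) for chunk_id, vec in index]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]

# Hypothetical three-chunk index with made-up 3-dimensional embeddings.
index = [
    ("lease_memo.txt#0", [0.9, 0.1, 0.0]),
    ("hr_policy.txt#3", [0.0, 0.2, 0.9]),
    ("lease_memo.txt#1", [0.7, 0.6, 0.1]),
]
query_vec = [1.0, 0.2, 0.0]  # pretend this is the embedded question
results = top_k(query_vec, index, k=2)
```

With these made-up numbers, the two lease memo chunks score highest and the HR policy chunk is left out.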

Context injection: The relevant document chunks are included in the prompt sent to the AI model, along with the user's question. The AI generates its response using both its general training and the specific context from your documents.
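
In practice, "included in the prompt" means the retrieved text is pasted into the message the model receives. The sketch below shows one plausible way to assemble that message. The function name `build_prompt`, the instruction wording, and the sample document are all assumptions for illustration; real systems phrase and format their prompts differently.

```python
def build_prompt(question, chunks):
    """Combine retrieved chunks and the user's question into one prompt string.

    chunks is a list of dicts with 'source' and 'text' keys (hypothetical shape).
    """
    context_lines = []
    for number, chunk in enumerate(chunks, start=1):
        # Number each chunk so the model can refer back to it in its answer.
        context_lines.append(f"[{number}] ({chunk['source']}) {chunk['text']}")
    context = "\n".join(context_lines)
    return (
        "Answer the question using only the numbered context below. "
        "If the context does not contain the answer, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\n"
        "Answer (cite sources by number):"
    )

# Hypothetical retrieved chunk and question.
example_chunks = [
    {"source": "engagement_letter.txt", "text": "Fees are billed monthly."},
]
example_prompt = build_prompt("How are fees billed?", example_chunks)
```

Telling the model to rely only on the supplied context, and to say so when the context falls short, is one common way to keep answers tied to your documents.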

Source citation: The system includes references to which documents it used, so users can verify the answer against the originals.
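
One simple way to produce those references, assuming the model was asked to cite numbered context chunks like "[1]" or "[2]", is to scan the answer for the markers and map them back to file names. This is a sketch of that idea only. The function name `cited_sources` and the sample data are hypothetical, and some systems skip this step and list every retrieved document instead.

```python
import re

def cited_sources(answer, chunks):
    """Return the source names for every valid [n] marker found in the answer.

    chunks is the same ordered list of retrieved chunks that was numbered in the prompt.
    """
    numbers = sorted({int(n) for n in re.findall(r"\[(\d+)\]", answer)})
    # Ignore markers that do not correspond to a retrieved chunk.
    return [chunks[n - 1]["source"] for n in numbers if 1 <= n <= len(chunks)]

# Hypothetical retrieved chunks and model answer.
retrieved = [
    {"source": "settlement_summary.txt", "text": "The matter settled in March."},
    {"source": "strategy_memo.txt", "text": "We argued the landlord bore the cost."},
]
answer = "The firm argued the landlord bore the cost [2]. The matter settled [1][2]. See also [7]."
sources = cited_sources(answer, retrieved)
```

Here the stray "[7]" is dropped because no seventh chunk was retrieved, which guards against the model citing a source that does not exist.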

All of this typically happens in seconds, entirely on your hardware.

What RAG doesn't do

To be clear about the limitations:

- It doesn't replace human judgment. RAG surfaces relevant information and helps generate drafts. A qualified professional still needs to review the output.

- It's only as good as your data. If your documents are disorganized, incomplete, or outdated, the AI's answers will reflect that. The system works best when it has comprehensive, well-maintained source material.

- It doesn't learn from conversations. RAG retrieves from your document library — it doesn't automatically learn from every chat interaction. New knowledge needs to be added as documents.

Getting started with RAG

If you want to build a searchable knowledge base for your organization, the path is straightforward:

- AI Operations Audit ($3,500) — We assess your document library, identify the highest-value knowledge base candidates, and build a working RAG prototype using your actual documents. You see it running live on the delivery call.

- Deployment — The knowledge base is installed on your hardware, loaded with your documents, and configured for your team's workflows.

- Ongoing optimization — We continuously refine the retrieval quality based on how your team uses the system.

This is the "Institutional Memory" module in our deployment framework — and it's one of the most immediately impactful capabilities for any organization that has accumulated years of documents, decisions, and institutional knowledge.

Book a 15-minute call to discuss what a RAG-powered knowledge base would look like for your organization.

Related reading:

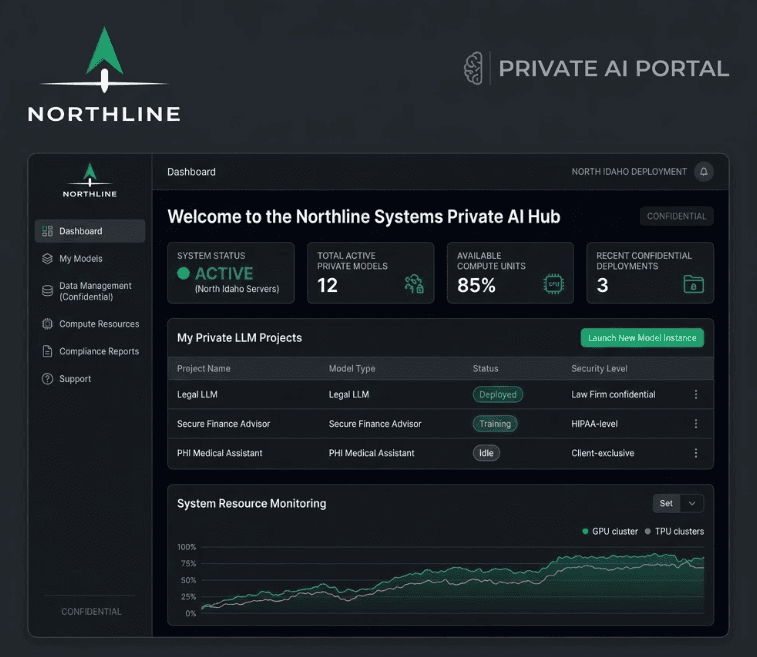

- What a Private AI Deployment Actually Looks Like

- Local AI Models vs ChatGPT: A Real-World Comparison

- Private AI for Law Firms — institutional memory is one of the highest-impact modules

- AI Operations Audit — What's Included

- The Hybrid Routing Approach to AI Privacy

Want to see what AI can do for your business?

Book a free 15-minute call. We'll tell you exactly what's automatable — and what isn't.
