The Hybrid Routing Approach to AI Privacy

You don't have to choose between AI quality and data privacy. Hybrid routing sends sensitive data to local models and everything else to cloud AI - automatically. Here's how it works.

The most common objection we hear from technical decision-makers evaluating private AI: "Local models aren't as good as GPT-4 or Claude. Why would I accept lower quality?"

Fair question. And the answer is: you don't have to.

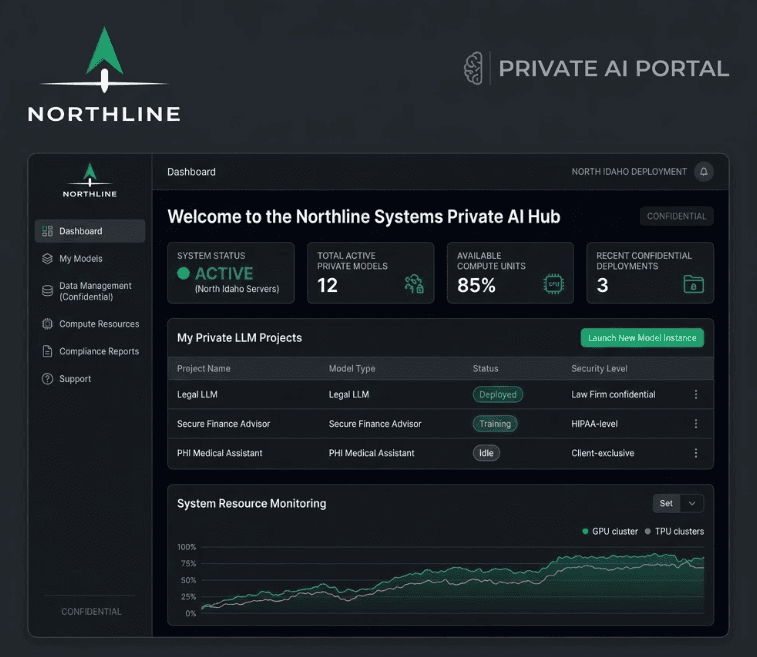

Hybrid routing gives you the privacy guarantees of on-premise AI for sensitive work and the quality advantages of cloud AI for everything else. Your team interacts with a single portal. The system handles the rest.

The binary choice is a false choice

Most AI conversations frame the decision as either/or:

- Cloud AI: Best quality, zero privacy

- Local AI: Full privacy, lower quality

This framing is wrong. Not all of your data carries the same sensitivity. A client's medical record and a general question about HIPAA filing deadlines are fundamentally different - one is PHI that can never leave your control, and the other is public information where cloud model quality is preferred.

Forcing both through the same pipeline - whether that's cloud or local - means you're either accepting unnecessary risk or unnecessary quality tradeoffs.

How hybrid routing works

The system classifies each request based on data sensitivity and routes it to the appropriate model:

Layer 1: Data classification

Every input is analyzed before processing. The classification considers:

- Does the input contain identifiable client/patient information? Names, case numbers, account numbers, medical record numbers.

- Does it reference privileged communications? Attorney-client discussions, medical consultations, financial advisory conversations.

- Does it contain regulated data? PHI, CUI, data covered by specific NDAs or compliance frameworks.

- Is the content commercially sensitive? Bid pricing, M&A strategies, proprietary processes.

If any of these triggers fires, the request routes to the local model. If none does, it routes to cloud.
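The any-trigger rule above can be sketched in a few lines of Python. This is a minimal illustration, not the production classifier: the category names and regular expressions are hypothetical placeholders, and a real deployment would likely need rules tuned to its own data and something more robust than keyword matching.

```python
import re

# Hypothetical trigger patterns, one per category described above.
# Illustrative only; real rules would be configured per deployment.
TRIGGERS = {
    "identifiable_info": re.compile(
        r"\b(MRN|case no\.?|account no\.?)\s*[:#]?\s*\d+", re.I
    ),
    "privileged": re.compile(
        r"\b(attorney-client|privileged|patient consultation)\b", re.I
    ),
    "regulated": re.compile(r"\b(PHI|CUI|NDA)\b"),
    "commercial": re.compile(r"\b(bid pricing|M&A|proprietary)\b", re.I),
}


def matched_triggers(text: str) -> list[str]:
    """Return the names of all trigger categories that match the input."""
    return [name for name, pattern in TRIGGERS.items() if pattern.search(text)]


def route(text: str) -> str:
    """Route locally if any trigger matches, otherwise route to cloud."""
    return "local" if matched_triggers(text) else "cloud"
```

Returning the list of matched categories, rather than only a yes/no answer, makes it possible to record why a request was kept local.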

Layer 2: Model selection

Local routing sends the request to the on-premise model (DeepSeek, Llama, or Mistral running on your Mac Mini). The data never leaves your hardware. Response time is typically 15-45 seconds depending on complexity.

Cloud routing sends the request to a cloud API (Claude or GPT-4) with appropriate data handling agreements in place. Response time is typically 3-10 seconds, and model quality is best-in-class for open-ended tasks.
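One way to picture model selection is as a small dispatch table keyed by the routing decision. The sketch below assumes two hypothetical handler functions, `run_local_model` and `run_cloud_model`, which stand in for the real calls to an on-premise model server and a cloud API; neither reflects an actual vendor interface.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Backend:
    name: str
    leaves_premises: bool  # True if the prompt is sent off local hardware
    handler: Callable[[str], str]


def run_local_model(prompt: str) -> str:
    # Placeholder for a call to an on-premise model server (hypothetical).
    return f"[local] {prompt}"


def run_cloud_model(prompt: str) -> str:
    # Placeholder for a call to a cloud API (hypothetical).
    return f"[cloud] {prompt}"


BACKENDS = {
    "local": Backend("on-prem model", leaves_premises=False, handler=run_local_model),
    "cloud": Backend("cloud API", leaves_premises=True, handler=run_cloud_model),
}


def dispatch(route: str, prompt: str) -> str:
    """Send the prompt to the backend chosen by the classifier."""
    backend = BACKENDS[route]
    return backend.handler(prompt)
```

An unknown route raises `KeyError` instead of silently falling through to a backend, so a misconfigured rule fails loudly.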

Layer 3: Transparency

Every request is logged with its routing decision. Your team can see where each query was processed. Leadership gets monthly reports showing the breakdown of local vs. cloud processing, giving full visibility into data handling.
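As a rough illustration of how a routing log could feed the monthly report, the sketch below assumes a simple `RoutingRecord` structure and a `monthly_breakdown` helper. Both names and all fields are hypothetical; the actual log schema is not described here.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class RoutingRecord:
    timestamp: datetime
    user: str
    route: str   # "local" or "cloud"
    reason: str  # e.g. which trigger fired, or "no trigger"


def monthly_breakdown(records, year, month):
    """Count local vs. cloud requests for one calendar month."""
    counts = Counter(
        r.route
        for r in records
        if r.timestamp.year == year and r.timestamp.month == month
    )
    total = sum(counts.values())
    # With no records for the month, counts is empty and so is the result.
    return {
        route: {"count": n, "share": n / total}
        for route, n in counts.items()
    }
```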

What this looks like in practice

Example: Law firm

| Task | Data sensitivity | Routes to | Why |
|---|---|---|---|
| Review client contract | Privileged | Local | Contains client data and privileged terms |
| Research commercial lease case law | Public | Cloud | Public legal information, better quality from GPT-4 |
| Draft engagement letter for new client | Privileged | Local | Contains client name, matter details |
| Summarize recent changes to Idaho LLC statute | Public | Cloud | Public legal information |
| Search firm's case history for similar matter | Privileged | Local | Queries internal privileged database |

Example: Medical practice

| Task | Data sensitivity | Routes to | Why |
|---|---|---|---|
| Draft SOAP note from provider dictation | PHI | Local | Contains patient clinical data |
| Look up drug interaction for two medications | Public | Cloud | Public pharmacological information |
| Process new patient intake form | PHI | Local | Contains patient PII and clinical history |
| Draft generic patient education handout | Public | Cloud | No patient-specific information |
| Generate prior authorization appeal | PHI | Local | Contains diagnosis, treatment, patient details |

Example: Construction firm

| Task | Data sensitivity | Routes to | Why |
|---|---|---|---|
| Analyze subcontractor bid pricing | Competitive | Local | Bid data is commercially sensitive |
| Research building code requirements | Public | Cloud | Public regulatory information |
| Draft RFI response for active project | Competitive | Local | Contains project-specific scope and pricing |
| Generate a generic safety meeting agenda | Public | Cloud | No project-specific data |
| Compare current bid against historical library | Competitive | Local | Proprietary pricing data |

The quality gap is smaller than you think

For the specific tasks that regulated businesses need most, local models perform close to cloud models:

- Document analysis and extraction: Local models are very good at reading a contract and pulling out specific clauses, dates, and obligations. This is a structured task with clear success criteria.

- Summarization: Condensing a 40-page document into a 2-page summary works well locally. The output may be slightly less polished than GPT-4's, but the content accuracy is comparable.

- Classification: Categorizing documents, routing inquiries, and tagging data by type is a strength of local models.

- Template-based drafting: Generating documents from your own templates with variable inputs is a structured task where local models excel.

Where cloud models still win:

- Open-ended research and reasoning: Complex, multi-step analysis with no clear template benefits from the larger parameter counts of cloud models.

- Creative writing: Marketing copy, client-facing prose, and nuanced communication are better from GPT-4 or Claude.

- Novel problem-solving: Unfamiliar tasks with no established pattern to follow benefit from the larger training datasets behind cloud models.

The hybrid approach routes each task to wherever it'll be handled best. You get cloud quality where it matters and local privacy where it's required.

Implementation

The routing layer is built into the portal your team uses. There's no manual step: your team doesn't select "local" or "cloud" for each request. The system decides based on its classification rules.

The classification rules are customizable. During the build phase, we configure them based on your specific data types, compliance requirements, and workflow patterns. During the 14-day hypercare period, we refine them based on real usage.

If the system is uncertain about a classification, it defaults to local. Privacy-first is the default behavior.
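A privacy-first default can be expressed as "cloud only when confidently non-sensitive". The sketch below assumes the classifier produces a sensitivity score between 0 and 1, or `None` when it fails; that score, the `CLOUD_MAX_SCORE` cutoff, and the function name are all hypothetical choices for illustration.

```python
from typing import Optional

# Hypothetical cutoff: only scores below this are treated as clearly non-sensitive.
CLOUD_MAX_SCORE = 0.1


def choose_route(sensitivity_score: Optional[float]) -> str:
    """Return "cloud" only when the classifier is confident the input is not sensitive."""
    if sensitivity_score is None:
        return "local"  # classifier failed or timed out
    if not 0.0 <= sensitivity_score <= 1.0:
        return "local"  # out-of-range (or NaN) score is treated as uncertain
    return "cloud" if sensitivity_score < CLOUD_MAX_SCORE else "local"
```

Every failure path in this sketch returns "local", so an error in classification can reduce response quality but cannot send data off-premise.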

Why this matters for compliance

Hybrid routing gives you a defensible position:

- You can demonstrate that sensitive data is processed exclusively on local infrastructure. The logs prove it.

- You can show that your AI usage policy is enforced by architecture, not just by employee behavior. The routing layer is the enforcement mechanism.

- You can provide auditors with complete records of what data was processed where, when, and by whom.

This is stronger than any policy document alone. Policies rely on people following rules. Architecture enforces them automatically.
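To make the audit claims concrete, here is a small sketch of two checks an auditor might run against the routing log. The record fields (`day`, `user`, `sensitive`, `route`) and the sample entries are hypothetical, since the real log format is not specified here.

```python
from datetime import date


def find_violations(records):
    """Return records where data marked sensitive was processed anywhere but locally."""
    return [r for r in records if r["sensitive"] and r["route"] != "local"]


def records_for_user(records, user, start, end):
    """Return one user's records between two dates, inclusive."""
    return [r for r in records if r["user"] == user and start <= r["day"] <= end]


# Hypothetical sample log entries.
LOG = [
    {"day": date(2024, 5, 2), "user": "alice", "sensitive": True, "route": "local"},
    {"day": date(2024, 5, 3), "user": "bob", "sensitive": False, "route": "cloud"},
    {"day": date(2024, 5, 9), "user": "alice", "sensitive": False, "route": "cloud"},
]
```

An empty result from `find_violations(LOG)` is the kind of evidence the first bullet describes: no sensitive request appears in the log with a cloud route.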

Getting started

The hybrid routing layer is included in every private AI deployment we build. It's not an add-on - it's a core component of the base platform.

The first step is understanding what data your organization handles and how it should be classified. That's part of the AI Operations Audit: $3,500, delivered in 3 business days, credited toward a build.

Book a 15-minute call to discuss whether hybrid routing makes sense for your operation.

