Why Your Firm Should Stop Using ChatGPT for Client Work Today

A direct case for why professional services firms — law, medical, financial, construction — should immediately stop using consumer and enterprise cloud AI for client-facing work, and what to replace it with.

This isn't a scare piece. It's a risk assessment.

If your firm handles client data that's protected by privilege, regulation, NDA, or fiduciary duty — and your team is using ChatGPT, Claude, Gemini, or any cloud AI tool to process that data — you have an active, unmitigated compliance exposure right now.

Here's the situation, the risk, and the fix.

The Situation

A 2023 analysis by Cyberhaven found that 11% of the data employees paste into ChatGPT is confidential. Samsung banned generative AI tools on company devices after engineers pasted proprietary source code into ChatGPT. Amazon warned employees after noticing ChatGPT responses that closely resembled internal company data. The pattern is consistent across industries.

Your team is almost certainly doing the same thing. Not maliciously — productively. They're:

- Pasting contract clauses to get AI summaries

- Uploading financial statements for analysis

- Asking ChatGPT to help draft client communications

- Processing intake forms through AI tools

- Using AI to research case-specific questions with identifying details

- Summarizing meeting notes that contain privileged information

Every one of these actions sends your client's data to a third-party server you don't control.

The Risk by Industry

Law Firms

Attorney-client privilege may be waived when privileged communications are disclosed to a third party without the client's informed consent, and sending them to a cloud AI provider is arguably such a disclosure. Multiple state bars have issued guidance requiring firms to evaluate AI tools for privilege implications. A malpractice claim based on privilege waiver through AI usage is not a hypothetical risk; it is a foreseeable one.

Your exposure: Disciplinary proceedings, malpractice claims, client loss, reputational damage.

Read more: Private AI for Law Firms

Medical Practices

HIPAA civil penalties for unauthorized disclosure of PHI are tiered by culpability: they start at roughly $100 per violation and rise to an annual cap of about $1.9M per violation category, with the amounts adjusted for inflation each year. When a billing specialist pastes patient information into ChatGPT, that's a potential unauthorized disclosure. A BAA with OpenAI doesn't eliminate this risk; it creates shared liability while still requiring a thorough risk assessment most practices haven't conducted.

Your exposure: Civil penalties of up to roughly $1.9M per violation category per year, OCR investigation, criminal penalties possible.

Read more: Private AI for Medical Practices

Financial Advisors

SEC Regulation S-P requires firms to protect client financial information. FINRA rules mandate supervision of electronic communications. AI tool usage with client data creates a new category of electronic communication that most compliance frameworks haven't addressed. The SEC has signaled increasing scrutiny of AI usage in financial services.

Your exposure: SEC enforcement action, FINRA sanctions, client lawsuits, E&O claims.

Read more: Private AI for Financial Advisors

Construction Firms

Your bid data is the most competitively sensitive information in your company. If a competitor sees your pricing, they can underbid you on every project. When an estimator uses ChatGPT to help analyze a bid package, that pricing data is on someone else's server. Cloud AI providers have had data exposure incidents. Your bid data in the wrong hands costs real projects and real revenue.

Your exposure: Loss of competitive advantage, contract violations, potential litigation from project owners.

Read more: Private AI for Construction

Government Contractors

CUI (Controlled Unclassified Information) processed through unauthorized cloud services is a CMMC compliance violation. Period. If your team processes CUI through any cloud AI tool, you're risking your government contracting eligibility.

Your exposure: Loss of contracting eligibility, CMMC certification failure, contract termination.

Read more: Private AI for Government Contractors

Why "Just Ban It" Doesn't Work

Many firms have tried the prohibition approach: "No one is allowed to use AI tools." This fails for three reasons:

Your team is already using AI. They started before you noticed, and a memo won't stop them. They'll use personal devices, personal accounts, and unofficial workarounds. You've just pushed AI usage underground where you can't see or control it.

Your competitors are using AI. The firm down the street is processing contracts in 30 seconds while your team spends 90 minutes. They're doing intake in 5 minutes while yours takes 45. Banning AI is a competitive disadvantage that compounds every month.

AI is the right tool. The productivity gains are real. Your team knows it. Banning the right tool because the wrong deployment model creates risk is solving the wrong problem.

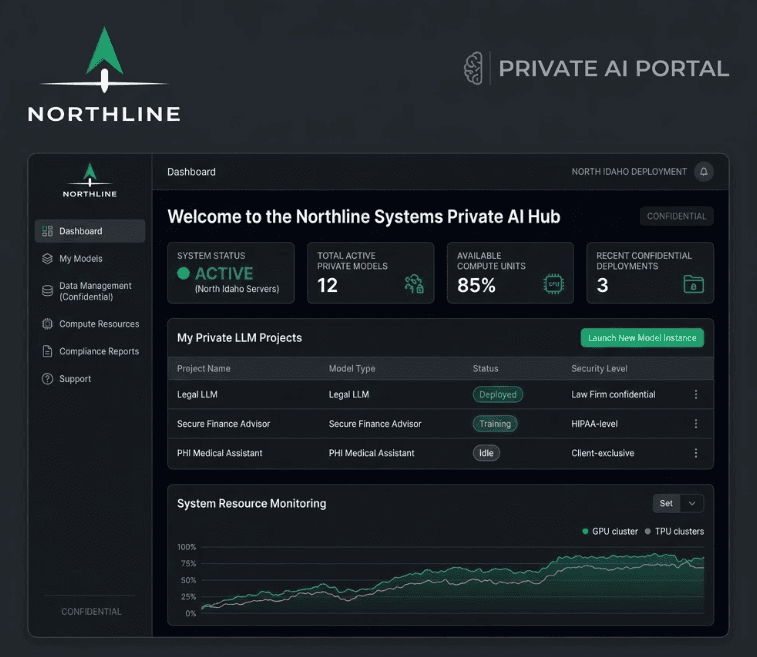

The Fix: Private AI Infrastructure

The solution isn't to stop using AI. It's to run AI on hardware you own, inside your building, where client data never touches an external server.

A private AI deployment gives your team:

- The same AI capabilities — document review, drafting, analysis, research, automation

- On hardware you control — a Mac Mini in your office running open-source models

- With hybrid routing — sensitive data stays local, general tasks route to cloud AI for quality

- Through one portal — your team uses a single web interface, routing is automatic

- Under your governance — audit logging, usage policies, access controls

The data handling model is identical to your existing technology: files on your server, print jobs on your printer, emails on your Exchange server. The AI is new; the data posture is not.
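To make the hybrid routing idea concrete, here is a minimal sketch of how a portal might decide where a prompt goes and record that decision for audit. Everything in it is an assumption for illustration: the pattern names, the regular expressions, the `route` and `handle` functions, and the in-memory `audit_log` are hypothetical, and a real deployment would take its rules from the firm's own data classification framework rather than a handful of regexes.

```python
import re
from dataclasses import dataclass

# Hypothetical sensitivity rules, for illustration only. A real policy would
# be derived from the firm's data classification framework.
SENSITIVE_PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "privilege_marker": re.compile(r"attorney[- ]client|privileged", re.IGNORECASE),
    "cui_marker": re.compile(r"\bCUI\b"),
    "dollar_amount": re.compile(r"\$\s?\d"),
}


@dataclass
class RoutingDecision:
    target: str         # "local" (on-premises model) or "cloud"
    reasons: list[str]  # names of the patterns that matched


def route(prompt: str) -> RoutingDecision:
    """Send a prompt to the local model if any sensitive pattern matches."""
    reasons = [name for name, pattern in SENSITIVE_PATTERNS.items()
               if pattern.search(prompt)]
    return RoutingDecision("local" if reasons else "cloud", reasons)


# In-memory audit trail; a real system would write to durable storage.
audit_log: list[dict] = []


def handle(user: str, prompt: str) -> RoutingDecision:
    """Route a prompt and log who sent it and why it went where it did."""
    decision = route(prompt)
    audit_log.append(
        {"user": user, "target": decision.target, "reasons": decision.reasons}
    )
    return decision


if __name__ == "__main__":
    print(handle("alice", "Summarize this privileged memo for the client"))
    print(handle("bob", "Write a haiku about spring"))
```

Note that the sketch logs only the routing outcome and the matched rule names, not the prompt text, so the audit trail itself does not become a second copy of client data. Pattern matching of this kind will miss sensitive content that carries no obvious marker, so a cautious default would be to route anything uncertain to the local model.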

What To Do This Week

Step 1: Find out what's happening

Ask your team what AI tools they're using and what data they're processing. Send an anonymous survey if needed. The answer will be worse than you expect, and that's actually good news — it means there's demand for AI tools, which makes adoption of a proper solution easier.

Step 2: Get an assessment

Our AI Operations Audit gives you a complete picture in 3 business days:

- Every AI tool your team currently uses

- What data is being exposed

- Data classification framework (what's sensitive, what's not)

- Written AI usage policy your team can adopt immediately

- System architecture for a private deployment

- Working prototype of your first automation

- Exact pricing for a full deployment

$3,500. 7-day money-back guarantee. Full fee credited toward your build.

Step 3: Deploy

Give your team the AI tools they clearly want — without the compliance risk. Typical deployment timeline: 1-2 weeks from audit to production.

The Math

For a 12-person professional services firm:

| Item | Annual Amount |
|---|---|
| Current risk exposure (one incident) | $100,000+ |
| ChatGPT Enterprise (alternative) | $8,640/year + compliance overhead |
| Private AI deployment (year 1) | ~$65,000–$70,000 |
| Private AI (year 2+) | ~$36,000/year |
| Time recovered (25 hrs/week at $250/hr) | $325,000/year in billable capacity |

The deployment pays for itself many times over, and it keeps sensitive client data off third-party servers.
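The arithmetic behind the table can be reproduced in a few lines. This is a rough sketch, not a financial model: the $60 per seat per month figure is an assumption chosen because it reproduces the $8,640/year line for 12 people, and the calculation assumes every recovered hour is actually billed, over 52 weeks a year.

```python
# Inputs taken from the table above, except where marked as assumptions.
SEATS = 12
ASSUMED_SEAT_PRICE_PER_MONTH = 60   # assumption: reproduces the $8,640/year figure
HOURS_RECOVERED_PER_WEEK = 25
BILLABLE_RATE = 250                 # dollars per hour
WEEKS_PER_YEAR = 52                 # assumption: no weeks excluded

chatgpt_enterprise_annual = SEATS * ASSUMED_SEAT_PRICE_PER_MONTH * 12
recovered_capacity = HOURS_RECOVERED_PER_WEEK * BILLABLE_RATE * WEEKS_PER_YEAR

# Private deployment costs from the table (year 1 is a range).
year1_cost_low, year1_cost_high = 65_000, 70_000
year2_cost = 36_000

# Net billable capacity after paying for the deployment.
year1_net_low = recovered_capacity - year1_cost_high
year1_net_high = recovered_capacity - year1_cost_low
year2_net = recovered_capacity - year2_cost

if __name__ == "__main__":
    print(f"ChatGPT Enterprise: ${chatgpt_enterprise_annual:,}/year")
    print(f"Recovered capacity: ${recovered_capacity:,}/year")
    print(f"Year 1 net: ${year1_net_low:,} to ${year1_net_high:,}")
    print(f"Year 2+ net: ${year2_net:,}/year")
```

Swapping in your own headcount, rate, and a more conservative share of recovered hours that become billable is the quickest way to see whether the conclusion holds for your firm.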

Book a 15-minute call to discuss your firm's specific situation. No pitch, no pressure — just a conversation about whether private AI makes sense for your operation.

