Why Your Team Is Already Putting Sensitive Data Into AI (And What to Do About It)

Your employees are using ChatGPT, Claude, and Gemini right now — probably with client data. Here's why that's a bigger risk than you think, and how to get ahead of it before it becomes a compliance nightmare.

Here's something most business owners don't know: your team is already using AI tools at work. They're pasting client emails into ChatGPT to draft responses. They're uploading contracts to Claude to summarize terms. They're feeding customer data into AI assistants to generate reports faster.

They're not doing it to be reckless. They're doing it because it works — and nobody told them not to.

The shadow AI problem

"Shadow AI" is the enterprise term for unauthorized AI tool usage within an organization. Unlike shadow IT from the 2010s (employees installing Dropbox without approval), shadow AI is harder to detect and carries higher stakes.

Why? Because the data being shared isn't just files — it's the contents of those files. Client names. Medical records. Financial details. Legal strategies. Proprietary business information.

When an employee pastes a client's medical intake form into ChatGPT to help draft a treatment plan summary, that data has now left your organization's control entirely.

What's actually at risk

For law firms

Attorney-client privilege is the foundation of legal practice. If a paralegal uses an AI tool to summarize case notes, and that data is stored, logged, or used to train models, the privilege becomes significantly harder to defend, because disclosing privileged material to a third party can be argued to waive it. A single subpoena from opposing counsel for AI tool usage logs can complicate the case fast.

For medical practices

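HIPAA violations carry civil penalties of $100 to $1.9 million per violation, with annual maximums of $50,000 to $1.9 million per violation category. If patient data is processed by an AI tool that isn't covered under a Business Associate Agreement (BAA), you likely have a reportable breach: an impermissible disclosure of protected health information is presumed to be a breach unless a documented risk assessment shows a low probability that the data was compromised.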

For financial advisors

Sharing client financial data with AI tools can violate SEC and FINRA rules on data handling and client confidentiality, such as the safeguarding requirements of SEC Regulation S-P. The regulatory environment is tightening, not loosening.

For government contractors

If your team handles CUI (Controlled Unclassified Information) and that data enters a commercial AI tool that isn't authorized to handle CUI, you may be in violation of NIST SP 800-171 and CMMC requirements. That can disqualify you from future contracts.

For any business with NDAs

If you've signed an NDA with a client and your team processes their data through a third-party AI tool, you may be in breach of that agreement. Many NDAs were drafted before generative AI tools became widespread and don't mention them explicitly. That silence doesn't protect you: a general prohibition on disclosing confidential information to third parties can still cover an AI vendor.

Why this is happening now

Three factors converged:

AI tools are free and frictionless. ChatGPT doesn't require IT approval. There's no procurement process. Anyone with a browser can use it.

The productivity gains are real. Employees using AI tools are genuinely faster. They're not going to stop voluntarily — the incentive is too strong.

Most organizations have no AI usage policy. Without clear guidelines, employees make their own judgment calls. And their judgment is: "This saves me two hours, and nobody said I couldn't."

What to do about it

Banning AI tools outright doesn't work. Your team will use them anyway, just more quietly. The productive approach has four parts:

1. Audit current usage

Find out what tools your team is actually using, what data they're processing through them, and how frequently. This isn't about blame — it's about understanding your current exposure. Most organizations are surprised by the findings.
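One low-effort starting point for an audit is your web proxy or DNS logs. The sketch below is a minimal illustration, assuming a hypothetical CSV export with `user` and `host` columns; real log formats vary, and the domain list (`AI_TOOL_DOMAINS`) is an example you would extend. It only shows which tools are used and how often, not what data was pasted into them.

```python
import csv
import io
from collections import Counter

# Example list of consumer AI tool domains (illustrative, not exhaustive).
AI_TOOL_DOMAINS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
}

def tally_ai_usage(log_file):
    """Count requests per (user, AI tool) in a CSV log.

    Assumes a hypothetical export with 'user' and 'host' columns;
    real proxy or DNS logs will need their own parsing.
    """
    counts = Counter()
    for row in csv.DictReader(log_file):
        host = row["host"].strip().lower()
        for domain, tool in AI_TOOL_DOMAINS.items():
            # Match the domain itself or any subdomain of it.
            if host == domain or host.endswith("." + domain):
                counts[(row["user"], tool)] += 1
                break
    return counts

# Usage with a small made-up log.
sample_log = io.StringIO(
    "user,host\n"
    "alice,chatgpt.com\n"
    "alice,claude.ai\n"
    "bob,gemini.google.com\n"
    "alice,chatgpt.com\n"
    "bob,example.com\n"
)
for (user, tool), n in sorted(tally_ai_usage(sample_log).items()):
    print(f"{user}: {tool} x{n}")
# prints:
# alice: ChatGPT x2
# alice: Claude x1
# bob: Gemini x1
```

Counts like these tell you where to look next; finding out what data is involved still takes conversations with your team.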

2. Classify your data

Not all data carries the same risk. A marketing email draft in ChatGPT is different from a client's medical record. Establish clear tiers:

- Public: Safe for any AI tool (marketing copy, general research)

- Internal: Use only approved AI tools with enterprise agreements (internal processes, non-client data)

- Confidential: Must stay on infrastructure you control (client data, financial records, legal documents)

- Restricted: No AI processing permitted (PHI, CUI, data under active NDA)

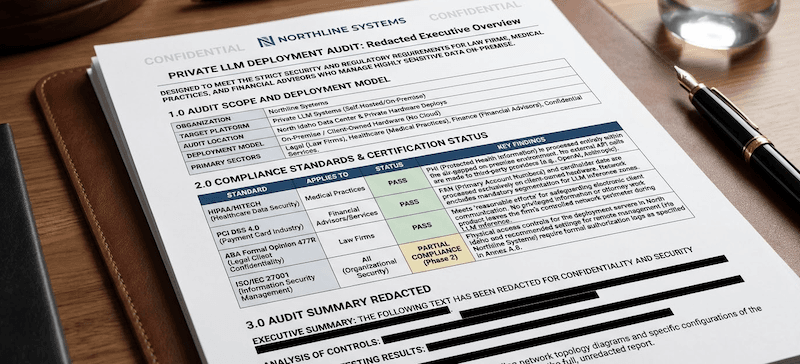
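If you want these tiers enforced by a tool (an internal gateway, a browser extension, or just a lookup table in your policy), they can be written down as explicit rules. The sketch below is one way to encode the four tiers, assuming three hypothetical tool categories. It also assumes that a self-hosted model is acceptable anywhere an enterprise tool is, which the tiers above don't state outright. Anything not listed is denied by default.

```python
from enum import Enum

class DataTier(Enum):
    PUBLIC = 1
    INTERNAL = 2
    CONFIDENTIAL = 3
    RESTRICTED = 4

class ToolType(Enum):
    CONSUMER = "consumer AI tool"            # e.g. a personal chatbot account
    ENTERPRISE = "approved enterprise tool"  # covered by an enterprise agreement
    SELF_HOSTED = "self-hosted model"        # runs on infrastructure you control

# Tool types each tier may be sent to. Assumption: self-hosted is at least
# as acceptable as an enterprise tool for internal data.
ALLOWED_TOOLS = {
    DataTier.PUBLIC: {ToolType.CONSUMER, ToolType.ENTERPRISE, ToolType.SELF_HOSTED},
    DataTier.INTERNAL: {ToolType.ENTERPRISE, ToolType.SELF_HOSTED},
    DataTier.CONFIDENTIAL: {ToolType.SELF_HOSTED},
    DataTier.RESTRICTED: set(),  # no AI processing permitted
}

def is_allowed(tier: DataTier, tool: ToolType) -> bool:
    """Default deny: only combinations listed in ALLOWED_TOOLS pass."""
    return tool in ALLOWED_TOOLS.get(tier, set())

print(is_allowed(DataTier.INTERNAL, ToolType.CONSUMER))        # False
print(is_allowed(DataTier.CONFIDENTIAL, ToolType.SELF_HOSTED))  # True
```

Listing only what is allowed keeps the rules short and means a new tool category starts out blocked until someone approves it.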

3. Deploy the right infrastructure

For confidential data, the answer isn't "don't use AI." It's "use AI on infrastructure you control." Local LLM deployments, self-hosted models, and API configurations covered by data processing agreements give your team the productivity benefits without the data exposure.

4. Write and enforce a policy

An AI usage policy isn't a nice-to-have anymore. It's the document that protects you when (not if) a data handling question comes up. It should cover:

- Approved tools and their appropriate use cases

- Data classification requirements for AI processing

- Prohibited actions (e.g., never paste client PII into a consumer AI tool)

- Reporting requirements for accidental exposure

- Consequences for policy violations
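A policy is easier to follow when a simple guardrail backs it up. As a rough illustration of the "never paste client PII" rule, the sketch below screens text for a few common US-style identifiers before it is sent to an AI tool. The patterns and function names are hypothetical and deliberately naive: they will miss many formats and flag some harmless text, so treat this as a teaching example rather than a replacement for a proper data loss prevention (DLP) product.

```python
import re

# Deliberately simple patterns for a few US-style identifiers (illustrative only).
PII_PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "us_phone": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}

def find_pii(text: str) -> list[str]:
    """Return the names of the patterns that match anywhere in text."""
    return [name for name, pattern in PII_PATTERNS.items() if pattern.search(text)]

def check_before_sending(text: str) -> bool:
    """Return False (and explain why) if text appears to contain PII."""
    hits = find_pii(text)
    if hits:
        print("Blocked: possible PII found (" + ", ".join(hits) + ")")
        return False
    return True

check_before_sending("Summarize: jane.doe@example.com, SSN 123-45-6789")
# prints: Blocked: possible PII found (email, us_ssn)
```

Blocking with a stated reason, rather than silently dropping the request, also gives you a natural hook for the reporting requirement in your policy.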

The cost of doing nothing vs. doing something

| Risk | Potential Cost |
|---|---|
| HIPAA violation | $100 to $1.9M per violation |
| NDA breach lawsuit | Often six figures or more |
| Client data exposure | Unquantifiable reputational damage |
| Regulatory non-compliance | Personal legal exposure |
| AI Operations Audit | $3,500 to know exactly where you stand |

The audit takes 3 business days. You get a complete picture of your current AI tool exposure, a data classification framework, a security assessment, and a written AI usage policy your team can adopt immediately.

If this sounds relevant to your organization, book a 15-minute call and we'll walk through what the assessment covers.

Want to see what AI can do for your business?

Book a free 15-minute call. We'll tell you exactly what's automatable — and what isn't.
